DerivaML Class

The DerivaML class provides a range of methods to interact with a Deriva catalog.

These methods assume tha tthe catalog contains a deriva-ml and a domain schema.

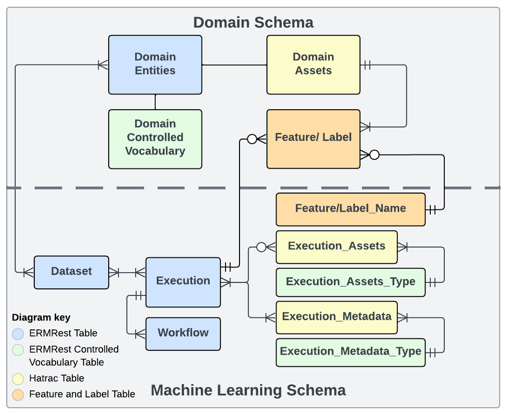

Data Catalog: The catalog must include both the domain schema and a standard ML schema for effective data management.

- Domain schema: The domain schema includes the data collected or generated by domain-specific experiments or systems.

- ML schema: Each entity in the ML schema is designed to capture details of the ML development process. It including the following tables

- A Dataset represents a data collection, such as aggregation identified for training, validation, and testing purposes.

- A Workflow represents a specific sequence of computational steps or human interactions.

- An Execution is an instance of a workflow that a user instantiates at a specific time.

- An Execution Asset is an output file that results from the execution of a workflow.

- An Execution Metadata is an asset entity for saving metadata files referencing a given execution.

Core module for DerivaML.

This module provides the primary public interface to DerivaML functionality. It exports the main DerivaML class along with configuration, definitions, and exceptions needed for interacting with Deriva-based ML catalogs.

Key exports

- DerivaML: Main class for catalog operations and ML workflow management.

- DerivaMLConfig: Configuration class for DerivaML instances.

- Exceptions: DerivaMLException and specialized exception types.

- Definitions: Type definitions, enums, and constants used throughout the package.

Example

from deriva_ml.core import DerivaML, DerivaMLConfig ml = DerivaML('deriva.example.org', 'my_catalog') datasets = ml.find_datasets()

BuiltinTypes

module-attribute

BuiltinTypes = BuiltinType

Alias for BuiltinType from deriva.core.typed.

This maintains backwards compatibility with existing DerivaML code that uses the plural form 'BuiltinTypes'. New code should use BuiltinType directly.

ColumnDefinition

module-attribute

ColumnDefinition = ColumnDef

Alias for ColumnDef from deriva.core.typed.

This maintains backwards compatibility with existing DerivaML code. New code should use ColumnDef directly.

TableDefinition

module-attribute

TableDefinition = TableDef

Alias for TableDef from deriva.core.typed.

This maintains backwards compatibility with existing DerivaML code. New code should use TableDef directly.

DerivaML

Bases: PathBuilderMixin, RidResolutionMixin, VocabularyMixin, WorkflowMixin, FeatureMixin, DatasetMixin, AssetMixin, ExecutionMixin, FileMixin, AnnotationMixin, DerivaMLCatalog

Core class for machine learning operations on a Deriva catalog.

This class provides core functionality for managing ML workflows, features, and datasets in a Deriva catalog. It handles data versioning, feature management, vocabulary control, and execution tracking.

Attributes:

| Name | Type | Description |

|---|---|---|

host_name |

str

|

Hostname of the Deriva server (e.g., 'deriva.example.org'). |

catalog_id |

Union[str, int]

|

Catalog identifier or name. |

domain_schema |

str

|

Schema name for domain-specific tables and relationships. |

model |

DerivaModel

|

ERMRest model for the catalog. |

working_dir |

Path

|

Directory for storing computation data and results. |

cache_dir |

Path

|

Directory for caching downloaded datasets. |

ml_schema |

str

|

Schema name for ML-specific tables (default: 'deriva_ml'). |

configuration |

ExecutionConfiguration

|

Current execution configuration. |

project_name |

str

|

Name of the current project. |

start_time |

datetime

|

Timestamp when this instance was created. |

Example

ml = DerivaML('deriva.example.org', 'my_catalog') ml.create_feature('my_table', 'new_feature') ml.add_term('vocabulary_table', 'new_term', description='Description of term')

Source code in src/deriva_ml/core/base.py

82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 331 332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 360 361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 380 381 382 383 384 385 386 387 388 389 390 391 392 393 394 395 396 397 398 399 400 401 402 403 404 405 406 407 408 409 410 411 412 413 414 415 416 417 418 419 420 421 422 423 424 425 426 427 428 429 430 431 432 433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 448 449 450 451 452 453 454 455 456 457 458 459 460 461 462 463 464 465 466 467 468 469 470 471 472 473 474 475 476 477 478 479 480 481 482 483 484 485 486 487 488 489 490 491 492 493 494 495 496 497 498 499 500 501 502 503 504 505 506 507 508 509 510 511 512 513 514 515 516 517 518 519 520 521 522 523 524 525 526 527 528 529 530 531 532 533 534 535 536 537 538 539 540 541 542 543 544 545 546 547 548 549 550 551 552 553 554 555 556 557 558 559 560 561 562 563 564 565 566 567 568 569 570 571 572 573 574 575 576 577 578 579 580 581 582 583 584 585 586 587 588 589 590 591 592 593 594 595 596 597 598 599 600 601 602 603 604 605 606 607 608 609 610 611 612 613 614 615 616 617 618 619 620 621 622 623 624 625 626 627 628 629 630 631 632 633 634 635 636 637 638 639 640 641 642 643 644 645 646 647 648 649 650 651 652 653 654 655 656 657 658 659 660 661 662 663 664 665 666 667 668 669 670 671 672 673 674 675 676 677 678 679 680 681 682 683 684 685 686 687 688 689 690 691 692 693 694 695 696 697 698 699 700 701 702 703 704 705 706 707 708 709 710 711 712 713 714 715 716 717 718 719 720 721 722 723 724 725 726 727 728 729 730 731 732 733 734 735 736 737 738 739 740 741 742 743 744 745 746 747 748 749 750 751 752 753 754 755 756 757 758 759 760 761 762 763 764 765 766 767 768 769 770 771 772 773 774 775 776 777 778 779 780 781 782 783 784 785 786 787 788 789 790 791 792 793 794 795 796 797 798 799 800 801 802 803 804 805 806 807 808 809 810 811 812 813 814 815 816 817 818 819 820 821 822 823 824 825 826 827 828 829 830 831 832 833 834 835 836 837 838 839 840 841 842 843 844 845 846 847 848 849 850 851 852 853 854 855 856 857 858 859 860 861 862 863 864 865 866 867 868 869 870 871 872 873 874 875 876 877 878 879 880 881 882 883 884 885 886 887 888 889 890 891 892 893 894 895 896 897 898 899 900 901 902 903 904 905 906 907 908 909 910 911 912 913 914 915 916 917 918 919 920 921 922 923 924 925 926 927 928 929 930 931 932 933 934 935 936 937 938 939 940 941 942 943 944 945 946 947 948 949 950 951 952 953 954 955 956 957 958 959 960 961 962 963 964 965 966 967 968 969 970 971 972 973 974 975 976 977 978 979 980 981 982 983 984 985 986 987 988 989 990 991 992 993 994 995 996 997 998 999 1000 1001 1002 1003 1004 1005 1006 1007 1008 1009 1010 1011 1012 1013 1014 1015 1016 1017 1018 1019 1020 1021 1022 1023 1024 1025 1026 1027 1028 1029 1030 1031 1032 1033 1034 1035 1036 1037 1038 1039 1040 1041 1042 1043 1044 1045 1046 1047 1048 1049 1050 1051 1052 1053 1054 1055 1056 1057 1058 1059 1060 1061 1062 1063 1064 1065 1066 1067 1068 1069 1070 1071 1072 1073 1074 1075 1076 1077 1078 1079 1080 1081 1082 1083 1084 1085 1086 1087 1088 1089 1090 1091 1092 1093 1094 1095 1096 1097 1098 1099 1100 1101 1102 1103 1104 1105 1106 1107 1108 1109 1110 1111 1112 1113 1114 1115 1116 1117 1118 1119 1120 1121 1122 1123 1124 1125 1126 1127 1128 1129 1130 1131 1132 1133 1134 1135 1136 1137 1138 1139 1140 1141 1142 1143 1144 1145 1146 1147 1148 1149 1150 1151 1152 1153 1154 1155 1156 1157 1158 1159 1160 1161 1162 1163 1164 1165 1166 1167 1168 1169 1170 1171 1172 1173 1174 1175 1176 1177 1178 1179 1180 1181 1182 1183 1184 1185 1186 1187 1188 1189 1190 1191 1192 1193 1194 1195 1196 1197 1198 1199 1200 1201 1202 1203 1204 1205 1206 1207 1208 1209 1210 1211 1212 1213 1214 1215 1216 1217 1218 1219 1220 1221 1222 1223 1224 1225 1226 1227 1228 1229 1230 1231 1232 1233 1234 1235 1236 1237 1238 1239 1240 1241 1242 1243 1244 1245 1246 1247 1248 1249 1250 1251 1252 1253 1254 1255 1256 1257 1258 1259 1260 1261 1262 1263 1264 1265 1266 1267 1268 1269 1270 1271 1272 1273 1274 1275 1276 1277 1278 1279 1280 1281 1282 1283 1284 1285 1286 1287 1288 1289 1290 1291 1292 1293 1294 1295 1296 1297 1298 1299 1300 1301 1302 1303 1304 1305 1306 1307 1308 1309 1310 1311 1312 1313 1314 1315 1316 1317 1318 1319 1320 1321 1322 1323 1324 1325 1326 1327 1328 1329 1330 1331 1332 1333 1334 1335 1336 1337 1338 1339 1340 1341 1342 1343 1344 1345 1346 1347 1348 1349 1350 1351 1352 1353 1354 1355 1356 1357 1358 1359 1360 1361 1362 1363 1364 1365 1366 1367 1368 1369 1370 1371 1372 1373 1374 1375 1376 1377 1378 1379 1380 1381 1382 1383 1384 1385 1386 1387 1388 1389 1390 1391 1392 1393 1394 1395 1396 1397 1398 1399 1400 1401 1402 1403 1404 1405 1406 1407 1408 1409 1410 1411 1412 1413 1414 1415 1416 1417 1418 1419 1420 1421 1422 1423 1424 1425 1426 1427 1428 1429 1430 1431 1432 1433 1434 1435 1436 1437 1438 1439 1440 1441 1442 1443 1444 1445 1446 1447 1448 1449 1450 1451 1452 1453 1454 1455 1456 1457 1458 1459 1460 1461 1462 1463 1464 1465 1466 1467 1468 1469 1470 1471 1472 1473 1474 1475 1476 1477 1478 1479 1480 1481 1482 1483 1484 1485 1486 1487 1488 1489 1490 1491 1492 1493 1494 1495 1496 1497 1498 1499 1500 1501 1502 1503 1504 1505 1506 1507 1508 1509 1510 1511 1512 1513 1514 1515 1516 1517 1518 1519 1520 1521 1522 1523 1524 1525 1526 1527 1528 1529 1530 1531 1532 1533 1534 1535 1536 1537 1538 1539 1540 1541 1542 1543 1544 1545 1546 1547 1548 1549 1550 1551 1552 1553 1554 1555 1556 1557 1558 1559 1560 1561 1562 1563 1564 1565 1566 1567 1568 1569 1570 1571 1572 1573 1574 1575 1576 1577 1578 1579 1580 1581 1582 1583 1584 1585 1586 1587 1588 1589 1590 1591 1592 1593 1594 1595 1596 1597 1598 1599 1600 1601 1602 1603 1604 1605 1606 1607 1608 1609 1610 1611 1612 1613 1614 1615 1616 1617 1618 1619 1620 1621 1622 1623 1624 1625 1626 1627 1628 1629 1630 1631 1632 1633 1634 1635 1636 1637 1638 1639 1640 1641 1642 1643 1644 1645 1646 1647 1648 1649 1650 1651 1652 1653 1654 1655 1656 1657 1658 1659 1660 1661 1662 1663 1664 1665 1666 1667 1668 1669 1670 1671 1672 1673 1674 1675 | |

catalog_provenance

property

catalog_provenance: (

"CatalogProvenance | None"

)

Get the provenance information for this catalog.

Returns provenance information if the catalog has it set. This includes information about how the catalog was created (clone, create, schema), who created it, when, and any workflow information.

For cloned catalogs, additional details about the clone operation are

available in the clone_details attribute.

Returns:

| Type | Description |

|---|---|

'CatalogProvenance | None'

|

CatalogProvenance if available, None otherwise. |

Example

ml = DerivaML('localhost', '45') prov = ml.catalog_provenance if prov: ... print(f"Created: {prov.created_at} by {prov.created_by}") ... print(f"Method: {prov.creation_method.value}") ... if prov.is_clone: ... print(f"Cloned from: {prov.clone_details.source_hostname}")

mode

property

mode: ConnectionMode

Current connection mode.

Returns:

| Type | Description |

|---|---|

ConnectionMode

|

The ConnectionMode this DerivaML instance was constructed |

ConnectionMode

|

with. Drives whether writes go live to the catalog (online) |

ConnectionMode

|

or stage in SQLite for later upload (offline). See spec §2.1. |

Example

ml.mode is ConnectionMode.online True

workspace

property

workspace: 'Workspace'

Per-catalog Workspace for local caching, denormalization, and asset manifests.

Backed by Workspace under {working_dir}/catalogs/{host}__{cat}/

working.db. Shared across invocations of scripts that use the same

working directory.

Example::

# Cache a full table

df = ml.cache_table("Subject")

# Check what's cached

ml.workspace.list_cached_results()

__del__

__del__() -> None

Cleanup method to handle incomplete executions.

Best-effort abort on DerivaML shutdown — only for executions that

died mid-flight (i.e., still in Created or Running). Any

post-Running status (Stopped, Failed, Pending_Upload,

Uploaded, Aborted) is treated as terminal here: the user

has either committed cleanly via the context manager or

explicitly transitioned the execution, and a forced abort would

either be a no-op or a wrongful state change.

Forcing a transition during __del__ is also unsafe at object-

teardown time: Python's GC ordering means the underlying

ErmrestCatalog HTTP session may already be finalized, in

which case the catalog PUT would crash with 'NoneType' object

has no attribute 'get' (the catalog's _session reads as

None). Limiting the abort to non-terminal states avoids the

common case where __exit__ already moved the execution to

Stopped and __del__ would otherwise re-transition to

Aborted against a dead session.

Source code in src/deriva_ml/core/base.py

381 382 383 384 385 386 387 388 389 390 391 392 393 394 395 396 397 398 399 400 401 402 403 404 405 406 407 408 409 410 411 412 | |

__init__

__init__(

hostname: str,

catalog_id: str | int,

domain_schemas: str

| set[str]

| None = None,

default_schema: str | None = None,

project_name: str | None = None,

cache_dir: str | Path | None = None,

working_dir: str

| Path

| None = None,

hydra_runtime_output_dir: str

| Path

| None = None,

ml_schema: str = ML_SCHEMA,

logging_level: int = logging.WARNING,

deriva_logging_level: int = logging.WARNING,

credential: dict | None = None,

s3_bucket: str | None = None,

use_minid: bool | None = None,

check_auth: bool = True,

clean_execution_dir: bool = True,

mode: ConnectionMode

| str = ConnectionMode.online,

) -> None

Initializes a DerivaML instance.

This method will connect to a catalog and initialize local configuration for the ML execution. This class is intended to be used as a base class on which domain-specific interfaces are built.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

hostname

|

str

|

Hostname of the Deriva server. |

required |

catalog_id

|

str | int

|

Catalog ID. Either an identifier or a catalog name. |

required |

domain_schemas

|

str | set[str] | None

|

Optional set of domain schema names. If None, auto-detects all non-system schemas. Use this when working with catalogs that have multiple user-defined schemas. |

None

|

default_schema

|

str | None

|

The default schema for table creation operations. If None and there is exactly one domain schema, that schema is used. If there are multiple domain schemas, this must be specified for table creation to work without explicit schema parameters. |

None

|

ml_schema

|

str

|

Schema name for ML schema. Used if you have a non-standard configuration of deriva-ml. |

ML_SCHEMA

|

project_name

|

str | None

|

Project name. Defaults to name of default_schema. |

None

|

cache_dir

|

str | Path | None

|

Directory path for caching data downloaded from the Deriva server as bdbag. If not provided, will default to working_dir. |

None

|

working_dir

|

str | Path | None

|

Directory path for storing data used by or generated by any computations. If no value is provided, will default to ${HOME}/deriva_ml |

None

|

s3_bucket

|

str | None

|

S3 bucket URL for dataset bag storage (e.g., 's3://my-bucket'). If provided, enables MINID creation and S3 upload for dataset exports. If None, MINID functionality is disabled regardless of use_minid setting. |

None

|

use_minid

|

bool | None

|

Use the MINID service when downloading dataset bags. Only effective when s3_bucket is configured. If None (default), automatically set to True when s3_bucket is provided, False otherwise. |

None

|

check_auth

|

bool

|

Check if the user has access to the catalog. |

True

|

clean_execution_dir

|

bool

|

Whether to automatically clean up execution working directories after successful upload. Defaults to True. Set to False to retain local copies. |

True

|

mode

|

ConnectionMode | str

|

Connection mode for this instance. |

online

|

Source code in src/deriva_ml/core/base.py

218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 331 332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 360 361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 | |

add_dataset_element_type

add_dataset_element_type(

element: str | Table,

) -> Table

Make it possible to add objects from element table to a dataset.

Creates a new association table linking Dataset to the given table, then updates catalog annotations so the new type is included in bag-export specs. If the workspace ORM was already built, it is rebuilt to pick up the new association table — the ORM is eagerly constructed at init time and does not see DDL changes applied after that point.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

element

|

str | Table

|

Name of the table (str) or Table object to register as a valid dataset element type. |

required |

Returns:

| Type | Description |

|---|---|

Table

|

The Table object that was registered. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If |

DerivaMLTableTypeError

|

If the table is a system or ML table and cannot be a dataset element type. |

Example

ml.add_dataset_element_type("Image") # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/dataset.py

222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 | |

add_features

add_features(*args, **kwargs) -> int

Retired — use exe.add_features(records) inside an execution context.

DerivaML.add_features has been removed. Feature writes must go

through the execution context so that provenance is tracked and values

are staged for atomic upload.

Replacement::

with ml.create_execution(config).execute() as exe:

exe.add_features(records)

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

Always. Points at the replacement API. |

Source code in src/deriva_ml/core/mixins/feature.py

347 348 349 350 351 352 353 354 355 356 357 358 359 360 361 362 363 364 365 366 | |

add_files

add_files(

files: Iterable[FileSpec],

execution_rid: RID,

dataset_types: str

| list[str]

| None = None,

description: str = "",

) -> "Dataset"

Adds files to the catalog with their metadata.

Registers files in the catalog along with their metadata (MD5, length, URL) and associates them with specified file types. Links files to the specified execution record for provenance tracking.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

files

|

Iterable[FileSpec]

|

File specifications containing MD5 checksum, length, and URL. |

required |

execution_rid

|

RID

|

Execution RID to associate files with (required for provenance). |

required |

dataset_types

|

str | list[str] | None

|

One or more dataset type terms from File_Type vocabulary. |

None

|

description

|

str

|

Description of the files. |

''

|

Returns:

| Name | Type | Description |

|---|---|---|

Dataset |

'Dataset'

|

Dataset that represents the newly added files. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If file_types are invalid or execution_rid is not an execution record. |

Examples:

Add files via an execution: >>> with ml.create_execution(config) as exe: # doctest: +SKIP ... files = [FileSpec(url="path/to/file.txt", md5="abc123", length=1000)] ... dataset = exe.add_files(files, dataset_types="text")

Source code in src/deriva_ml/core/mixins/file.py

60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 | |

add_term

add_term(

table: str | Table,

term_name: str,

description: str,

synonyms: list[str] | None = None,

exists_ok: bool = True,

) -> VocabularyTermHandle

Adds a term to a vocabulary table.

Creates a new standardized term with description and optional synonyms in a vocabulary table. Can either create a new term or return an existing one if it already exists.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

str | Table

|

Vocabulary table to add term to (name or Table object). |

required |

term_name

|

str

|

Primary name of the term (must be unique within vocabulary). |

required |

description

|

str

|

Explanation of term's meaning and usage. |

required |

synonyms

|

list[str] | None

|

Alternative names for the term. |

None

|

exists_ok

|

bool

|

If True, return the existing term if found. If False, raise error. |

True

|

Returns:

| Name | Type | Description |

|---|---|---|

VocabularyTermHandle |

VocabularyTermHandle

|

Object representing the created or existing term, with methods to modify it in the catalog. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If a term exists and exists_ok=False, or if the table is not a vocabulary table. |

Examples:

Add a new tissue type: >>> term = ml.add_term( # doctest: +SKIP ... table="tissue_types", ... term_name="epithelial", ... description="Epithelial tissue type", ... synonyms=["epithelium"] ... ) >>> # Modify the term >>> term.description = "Updated description" >>> term.synonyms = ("epithelium", "epithelial_tissue")

Attempt to add an existing term: >>> term = ml.add_term("tissue_types", "epithelial", "...", exists_ok=True) # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/vocabulary.py

105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 | |

add_visible_column

add_visible_column(

table: str | Table,

context: str,

column: str

| list[str]

| dict[str, Any],

position: int | None = None,

) -> list[Any]

Add a column to the visible-columns list for a specific context.

Convenience method for adding columns without replacing the entire

visible-columns annotation. Changes are staged until

apply_annotations() is called.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

str | Table

|

Table name (str) or |

required |

context

|

str

|

The context to modify (e.g., |

required |

column

|

str | list[str] | dict[str, Any]

|

Column to add. Can be:

- str: column name (e.g., |

required |

position

|

int | None

|

Position to insert at (0-indexed). If |

None

|

Returns:

| Type | Description |

|---|---|

list[Any]

|

The updated column list for the context. |

Raises:

| Type | Description |

|---|---|

DerivaMLTableTypeError

|

If |

DerivaMLException

|

If |

Example

ml.add_visible_column("Image", "compact", "Description") # doctest: +SKIP ml.add_visible_column("Image", "detailed", ["domain", "Image_Subject_fkey"], 1) # doctest: +SKIP ml.apply_annotations() # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/annotation.py

454 455 456 457 458 459 460 461 462 463 464 465 466 467 468 469 470 471 472 473 474 475 476 477 478 479 480 481 482 483 484 485 486 487 488 489 490 491 492 493 494 495 496 497 498 499 500 501 502 503 504 505 506 507 508 509 510 511 512 513 514 515 516 517 518 519 520 | |

add_visible_foreign_key

add_visible_foreign_key(

table: str | Table,

context: str,

foreign_key: list[str]

| dict[str, Any],

position: int | None = None,

) -> list[Any]

Add a foreign key to the visible-foreign-keys list for a specific context.

Convenience method for adding related tables without replacing the

entire visible-foreign-keys annotation. Changes are staged until

apply_annotations() is called.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

str | Table

|

Table name (str) or |

required |

context

|

str

|

The context to modify (e.g., |

required |

foreign_key

|

list[str] | dict[str, Any]

|

Foreign key to add. Can be:

- list: inbound FK reference (e.g.,

|

required |

position

|

int | None

|

Position to insert at (0-indexed). If |

None

|

Returns:

| Type | Description |

|---|---|

list[Any]

|

The updated foreign key list for the context. |

Raises:

| Type | Description |

|---|---|

DerivaMLTableTypeError

|

If |

DerivaMLException

|

If |

Example

ml.add_visible_foreign_key("Subject", "detailed", ["domain", "Image_Subject_fkey"]) # doctest: +SKIP ml.apply_annotations() # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/annotation.py

684 685 686 687 688 689 690 691 692 693 694 695 696 697 698 699 700 701 702 703 704 705 706 707 708 709 710 711 712 713 714 715 716 717 718 719 720 721 722 723 724 725 726 727 728 729 730 731 732 733 734 735 736 737 738 739 740 741 742 743 744 745 746 747 748 | |

apply_annotations

apply_annotations() -> None

Apply all staged annotation changes to the catalog.

Pushes any in-memory annotation changes to the live catalog. Must

be called after any sequence of set_* or add_*/remove_*

annotation calls to make changes visible in Chaise.

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If the catalog is read-only or the apply call fails. |

Example

ml.set_display_annotation("Image", {"name": "Scan"}) # doctest: +SKIP ml.apply_annotations() # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/annotation.py

433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 448 | |

apply_catalog_annotations

apply_catalog_annotations(

navbar_brand_text: str = "ML Data Browser",

head_title: str = "Catalog ML",

) -> None

Apply catalog-level annotations including the navigation bar and display settings.

This method configures the Chaise web interface for the catalog. Chaise is Deriva's web-based data browser that provides a user-friendly interface for exploring and managing catalog data. This method sets up annotations that control how Chaise displays and organizes the catalog.

Navigation Bar Structure: The method creates a navigation bar with the following menus: - User Info: Links to Users, Groups, and RID Lease tables - Deriva-ML: Core ML tables (Workflow, Execution, Dataset, Dataset_Version, etc.) - WWW: Web content tables (Page, File) - {Domain Schema}: All domain-specific tables (excludes vocabularies and associations) - Vocabulary: All controlled vocabulary tables from both ML and domain schemas - Assets: All asset tables from both ML and domain schemas - Features: All feature tables with entries named "TableName:FeatureName" - Catalog Registry: Link to the ermrest registry - Documentation: Links to ML notebook instructions and Deriva-ML docs

Display Settings: - Underscores in table/column names displayed as spaces - System columns (RID) shown in compact and entry views - Default table set to Dataset - Faceting and record deletion enabled - Export configurations available to all users

Bulk Upload Configuration: Configures upload patterns for asset tables, enabling drag-and-drop file uploads through the Chaise interface.

Call this after creating the domain schema and all tables to initialize the catalog's web interface. The navigation menus are dynamically built based on the current schema structure, automatically organizing tables into appropriate categories.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

navbar_brand_text

|

str

|

Text displayed in the navigation bar brand area. |

'ML Data Browser'

|

head_title

|

str

|

Title displayed in the browser tab. |

'Catalog ML'

|

Example

ml = DerivaML('deriva.example.org', 'my_catalog')

After creating domain schema and tables...

ml.apply_catalog_annotations()

Or with custom branding:

ml.apply_catalog_annotations("My Project Browser", "My ML Project")

Source code in src/deriva_ml/core/base.py

1061 1062 1063 1064 1065 1066 1067 1068 1069 1070 1071 1072 1073 1074 1075 1076 1077 1078 1079 1080 1081 1082 1083 1084 1085 1086 1087 1088 1089 1090 1091 1092 1093 1094 1095 1096 1097 1098 1099 1100 1101 1102 1103 1104 1105 1106 1107 1108 1109 1110 1111 1112 1113 1114 1115 1116 1117 1118 1119 | |

asset_record_class

asset_record_class(

asset_table_name: str,

) -> type

Create a dynamically generated Pydantic model for an asset table's metadata.

The returned class is a subclass of AssetRecord with fields derived from the asset table's metadata columns (non-system, non-standard-asset columns). Fields are typed according to their database column type, and nullable columns are Optional.

Follows the same pattern as Feature.feature_record_class().

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

asset_table_name

|

str

|

Name of the asset table (e.g., "Image", "Model"). |

required |

Returns:

| Type | Description |

|---|---|

type

|

An AssetRecord subclass with validated fields matching the table's metadata. |

Example

ImageAsset = ml.asset_record_class("Image") # doctest: +SKIP record = ImageAsset(Subject="2-DEF", Acquisition_Date="2026-01-15") # doctest: +SKIP path = exe.asset_file_path("Image", "scan.jpg", metadata=record) # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/asset.py

424 425 426 427 428 429 430 431 432 433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 | |

bag_info

bag_info(

dataset: "DatasetSpec",

) -> dict[str, Any]

Get comprehensive info about a dataset bag: size, contents, and cache status.

Combines the size estimate with local cache status. Use this to decide whether to prefetch a bag before running an experiment.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

dataset

|

'DatasetSpec'

|

Specification of the dataset, including version and optional exclude_tables. |

required |

Returns:

| Type | Description |

|---|---|

dict[str, Any]

|

dict with keys: - tables: dict mapping table name to {row_count, is_asset, asset_bytes} - total_rows, total_asset_bytes, total_asset_size - cache_status: one of "not_cached", "cached_metadata_only", "cached_materialized", "cached_holey" - cache_path: local path to cached bag (if cached), else None |

Source code in src/deriva_ml/core/mixins/dataset.py

361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 380 381 382 383 384 385 386 387 388 | |

cache_dataset

cache_dataset(

dataset: "DatasetSpec",

materialize: bool = True,

) -> dict[str, Any]

Download a dataset bag into the local cache without creating an execution.

Use this to warm the cache before running experiments. No execution or provenance records are created.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

dataset

|

'DatasetSpec'

|

Specification of the dataset, including version and optional exclude_tables. |

required |

materialize

|

bool

|

If True (default), download all asset files. If False, download only table metadata. |

True

|

Returns:

| Type | Description |

|---|---|

dict[str, Any]

|

dict with bag_info results after caching. |

Source code in src/deriva_ml/core/mixins/dataset.py

506 507 508 509 510 511 512 513 514 515 516 517 518 519 520 521 522 523 524 525 526 527 528 529 530 531 532 533 534 | |

cache_table

cache_table(

table_name: str, force: bool = False

) -> "pd.DataFrame"

Fetch a table from the catalog and cache locally as SQLite.

On first call, fetches all rows from the catalog and stores in the

working data cache. Subsequent calls return the cached data without

contacting the catalog. Use force=True to re-fetch.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table_name

|

str

|

Name of the table to fetch (e.g., "Subject", "Image"). |

required |

force

|

bool

|

If True, re-fetch even if already cached. |

False

|

Returns:

| Type | Description |

|---|---|

'pd.DataFrame'

|

DataFrame with the table contents. |

Example::

subjects = ml.cache_table("Subject")

print(f"{len(subjects)} subjects")

# Second call returns cached data instantly

subjects = ml.cache_table("Subject")

Source code in src/deriva_ml/core/base.py

861 862 863 864 865 866 867 868 869 870 871 872 873 874 875 876 877 878 879 880 881 882 883 884 885 886 887 888 | |

catalog_snapshot

catalog_snapshot(

version_snapshot: str,

) -> Self

Return a new DerivaML instance connected to a specific catalog snapshot.

Catalog snapshots provide a read-only, point-in-time view of the catalog. The snapshot identifier is typically obtained from a dataset version record.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

version_snapshot

|

str

|

Snapshot identifier string (e.g., |

required |

Returns:

| Type | Description |

|---|---|

Self

|

A new DerivaML instance connected to the specified catalog snapshot. |

Source code in src/deriva_ml/core/base.py

743 744 745 746 747 748 749 750 751 752 753 754 755 756 757 758 759 760 761 | |

chaise_url

chaise_url(

table: RID | Table | str,

) -> str

Generates Chaise web interface URL.

Chaise is Deriva's web interface for data exploration. This method creates a URL that directly links to the specified table or record.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

RID | Table | str

|

Table to generate URL for (name, Table object, or RID). |

required |

Returns:

| Name | Type | Description |

|---|---|---|

str |

str

|

URL in format: https://{host}/chaise/recordset/#{catalog}/{schema}:{table} |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If table or RID cannot be found. |

Examples:

Using table name: >>> ml.chaise_url("experiment_table") 'https://deriva.org/chaise/recordset/#1/schema:experiment_table'

Using RID: >>> ml.chaise_url("1-abc123")

Source code in src/deriva_ml/core/base.py

950 951 952 953 954 955 956 957 958 959 960 961 962 963 964 965 966 967 968 969 970 971 972 973 974 975 976 977 978 979 980 | |

cite

cite(

entity: Dict[str, Any] | str,

current: bool = False,

) -> str

Generates citation URL for an entity.

Creates a URL that can be used to reference a specific entity in the catalog. By default, includes the catalog snapshot time to ensure version stability (permanent citation). With current=True, returns a URL to the current state.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

entity

|

Dict[str, Any] | str

|

Either a RID string or a dictionary containing entity data with a 'RID' key. |

required |

current

|

bool

|

If True, return URL to current catalog state (no snapshot). If False (default), return permanent citation URL with snapshot time. |

False

|

Returns:

| Name | Type | Description |

|---|---|---|

str |

str

|

Citation URL. Format depends on |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If an entity doesn't exist or lacks a RID. |

Examples:

Permanent citation (default): >>> url = ml.cite("1-abc123") >>> print(url) 'https://deriva.org/id/1/1-abc123@2024-01-01T12:00:00'

Current catalog URL: >>> url = ml.cite("1-abc123", current=True) >>> print(url) 'https://deriva.org/id/1/1-abc123'

Using a dictionary: >>> url = ml.cite({"RID": "1-abc123"})

Source code in src/deriva_ml/core/base.py

982 983 984 985 986 987 988 989 990 991 992 993 994 995 996 997 998 999 1000 1001 1002 1003 1004 1005 1006 1007 1008 1009 1010 1011 1012 1013 1014 1015 1016 1017 1018 1019 1020 1021 1022 1023 1024 1025 1026 1027 1028 1029 1030 | |

clean_execution_dirs

clean_execution_dirs(

older_than_days: int | None = None,

exclude_rids: list[str]

| None = None,

) -> dict[str, int]

Clean up execution working directories.

Removes execution output directories from the local working directory. Use this to free up disk space from completed or orphaned executions.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

older_than_days

|

int | None

|

If provided, only remove directories older than this many days. If None, removes all execution directories (except excluded). |

None

|

exclude_rids

|

list[str] | None

|

List of execution RIDs to preserve (never remove). |

None

|

Returns:

| Type | Description |

|---|---|

dict[str, int]

|

dict with keys: - 'dirs_removed': Number of directories removed - 'bytes_freed': Total bytes freed - 'errors': Number of removal errors |

Example

ml = DerivaML('deriva.example.org', 'my_catalog')

Clean all execution dirs older than 30 days

result = ml.clean_execution_dirs(older_than_days=30) print(f"Freed {result['bytes_freed'] / 1e9:.2f} GB")

Clean all except specific executions

result = ml.clean_execution_dirs(exclude_rids=['1-ABC', '1-DEF'])

Source code in src/deriva_ml/core/base.py

1503 1504 1505 1506 1507 1508 1509 1510 1511 1512 1513 1514 1515 1516 1517 1518 1519 1520 1521 1522 1523 1524 1525 1526 1527 1528 1529 1530 1531 1532 1533 1534 1535 1536 1537 1538 1539 1540 1541 1542 1543 1544 1545 1546 1547 1548 1549 1550 1551 1552 1553 1554 1555 1556 1557 1558 1559 1560 1561 1562 1563 1564 1565 1566 1567 1568 1569 1570 1571 1572 1573 1574 | |

clear_cache

clear_cache(

older_than_days: int | None = None,

) -> dict[str, int]

Clear the dataset cache directory.

Removes cached dataset bags from the cache directory. Can optionally filter by age to only remove old cache entries.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

older_than_days

|

int | None

|

If provided, only remove cache entries older than this many days. If None, removes all cache entries. |

None

|

Returns:

| Type | Description |

|---|---|

dict[str, int]

|

dict with keys: - 'files_removed': Number of files removed - 'dirs_removed': Number of directories removed - 'bytes_freed': Total bytes freed - 'errors': Number of removal errors |

Example

ml = DerivaML('deriva.example.org', 'my_catalog')

Clear all cache

result = ml.clear_cache() print(f"Freed {result['bytes_freed'] / 1e6:.1f} MB")

Clear cache older than 7 days

result = ml.clear_cache(older_than_days=7)

Source code in src/deriva_ml/core/base.py

1355 1356 1357 1358 1359 1360 1361 1362 1363 1364 1365 1366 1367 1368 1369 1370 1371 1372 1373 1374 1375 1376 1377 1378 1379 1380 1381 1382 1383 1384 1385 1386 1387 1388 1389 1390 1391 1392 1393 1394 1395 1396 1397 1398 1399 1400 1401 1402 1403 1404 1405 1406 1407 1408 1409 1410 1411 1412 1413 1414 1415 1416 1417 1418 1419 1420 1421 | |

clear_vocabulary_cache

clear_vocabulary_cache(

table: str | Table | None = None,

) -> None

Clear the vocabulary term cache.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

str | Table | None

|

If provided, only clear cache for this specific vocabulary table. If None, clear the entire cache. |

None

|

Source code in src/deriva_ml/core/mixins/vocabulary.py

65 66 67 68 69 70 71 72 73 74 75 76 77 78 | |

create_asset

create_asset(

asset_name: str,

column_defs: Iterable[

ColumnDefinition

]

| None = None,

fkey_defs: Iterable[

ColumnDefinition

]

| None = None,

referenced_tables: Iterable[Table]

| None = None,

comment: str = "",

schema: str | None = None,

update_navbar: bool = True,

) -> Table

Create a new asset table in the catalog.

Defines a Chaise-compatible asset table (Filename, URL, Length, MD5,

Description, plus system columns) with optional additional metadata

columns and foreign-key references. Registers the asset type in the

Asset_Type vocabulary and optionally updates the Chaise navigation

bar.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

asset_name

|

str

|

Name for the new asset table, e.g. |

required |

column_defs

|

Iterable[ColumnDefinition] | None

|

Extra metadata columns beyond the standard asset

columns. Each is a |

None

|

fkey_defs

|

Iterable[ColumnDefinition] | None

|

Foreign-key definitions from the asset table to other tables (e.g., linking images to a subject table). |

None

|

referenced_tables

|

Iterable[Table] | None

|

Tables that the new asset table should reference

via FKs. Convenience alternative to |

None

|

comment

|

str

|

Human-readable description of the asset table stored as the table comment in the catalog. |

''

|

schema

|

str | None

|

Schema in which to create the table. Defaults to

|

None

|

update_navbar

|

bool

|

If |

True

|

Returns:

| Type | Description |

|---|---|

Table

|

The newly created |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If a table named |

DerivaMLSchemaError

|

If |

Example

from deriva.core.typed import Column, builtin_types # doctest: +SKIP ml.create_asset( # doctest: +SKIP ... "ScanImage", # doctest: +SKIP ... comment="MRI scan images", # doctest: +SKIP ... ) # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/asset.py

65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 | |

create_execution

create_execution(

configuration: "ExecutionConfiguration | None" = None,

*,

datasets: "list[DatasetSpec | str] | None" = None,

assets: "list[AssetSpec | str] | None" = None,

workflow: "Workflow | RID | str | None" = None,

description: "str | None" = None,

dry_run: bool = False,

) -> "Execution"

Create an execution environment.

Initializes a local compute environment for executing an ML or

analytic routine. Accepts either a pre-built

:class:ExecutionConfiguration (the config-object form) or

individual keyword arguments that the method assembles into an

ExecutionConfiguration (the kwargs form). Mixing the two

forms is rejected with TypeError — pick one.

Creating executions requires online mode because the Execution RID is server-assigned.

Side effects:

- Downloads datasets specified in the configuration to the cache directory. If no version is specified, creates a new minor version for the dataset.

- Downloads any execution assets to the working directory.

- Creates an execution record in the catalog (unless

dry_run=True).

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

configuration

|

'ExecutionConfiguration | None'

|

A pre-built ExecutionConfiguration. If this

is provided, all of the kwargs below (except

|

None

|

datasets

|

'list[DatasetSpec | str] | None'

|

Kwargs form only. List of :class: |

None

|

assets

|

'list[AssetSpec | str] | None'

|

Kwargs form only. List of :class: |

None

|

workflow

|

'Workflow | RID | str | None'

|

A :class: |

None

|

description

|

'str | None'

|

Kwargs form only. Human-readable description of the execution. |

None

|

dry_run

|

bool

|

If True, skip creating catalog records and uploading results. |

False

|

Returns:

| Name | Type | Description |

|---|---|---|

An |

'Execution'

|

class: |

'Execution'

|

lifecycle. |

Raises:

| Type | Description |

|---|---|

TypeError

|

If |

DerivaMLOfflineError

|

If the current connection mode is

:attr: |

Example

Config-object form::

>>> config = ExecutionConfiguration( # doctest: +SKIP

... workflow=workflow,

... description="Process samples",

... datasets=[DatasetSpec(rid="4HM", version="1.0.0")],

... )

>>> with ml.create_execution(config) as execution:

... # Run analysis

... pass

>>> execution.upload_execution_outputs()

Kwargs form (equivalent)::

>>> with ml.create_execution( # doctest: +SKIP

... datasets=["4HM@1.0.0"],

... workflow=workflow,

... description="Process samples",

... ) as execution:

... # Run analysis

... pass

Source code in src/deriva_ml/core/mixins/execution.py

73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 | |

create_feature

create_feature(

target_table: Table | str,

feature_name: str,

terms: list[Table | str]

| None = None,

assets: list[Table | str]

| None = None,

metadata: list[

ColumnDefinition

| Table

| Key

| str

]

| None = None,

optional: list[str] | None = None,

comment: str = "",

update_navbar: bool = True,

) -> type[FeatureRecord]

Creates a new feature definition.

A feature represents a measurable property or characteristic that can be associated with records in the target table. Features can include vocabulary terms, asset references, and additional metadata.

Side Effects: This method dynamically creates: 1. A new association table in the domain schema to store feature values 2. A Pydantic model class (subclass of FeatureRecord) for creating validated feature instances

The returned Pydantic model class provides type-safe construction of feature records with automatic validation of values against the feature's definition (vocabulary terms, asset references, etc.). Use this class to create feature instances that can be inserted into the catalog.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

target_table

|

Table | str

|

Table to associate the feature with (name or Table object). |

required |

feature_name

|

str

|

Unique name for the feature within the target table. |

required |

terms

|

list[Table | str] | None

|

Optional vocabulary tables/names whose terms can be used as feature values. |

None

|

assets

|

list[Table | str] | None

|

Optional asset tables/names that can be referenced by this feature. |

None

|

metadata

|

list[ColumnDefinition | Table | Key | str] | None

|

Optional columns, tables, or keys to include in a feature definition. |

None

|

optional

|

list[str] | None

|

Column names that are not required when creating feature instances. |

None

|

comment

|

str

|

Description of the feature's purpose and usage. |

''

|

update_navbar

|

bool

|

If True (default), automatically updates the navigation bar to include the new feature table. Set to False during batch feature creation to avoid redundant updates, then call apply_catalog_annotations() once at the end. |

True

|

Returns:

| Type | Description |

|---|---|

type[FeatureRecord]

|

type[FeatureRecord]: A dynamically generated Pydantic model class for creating validated feature instances. The class has fields corresponding to the feature's terms, assets, and metadata columns. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If a feature definition is invalid or conflicts with existing features. |

Examples:

Create a feature with confidence score: >>> DiagnosisFeature = ml.create_feature( # doctest: +SKIP ... target_table="Image", ... feature_name="Diagnosis", ... terms=["Diagnosis_Type"], ... metadata=[ColumnDefinition(name="confidence", type=BuiltinTypes.float4)], ... comment="Clinical diagnosis label" ... ) >>> # Use the returned class to create validated feature instances >>> record = DiagnosisFeature( ... Image="1-ABC", # Target record RID ... Diagnosis_Type="Normal", # Vocabulary term ... confidence=0.95, ... Execution="2-XYZ" # Execution that produced this value ... )

Source code in src/deriva_ml/core/mixins/feature.py

65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 | |

create_table

create_table(

table: TableDefinition,

schema: str | None = None,

update_navbar: bool = True,

) -> Table

Creates a new table in the domain schema.

Creates a table using the provided TableDefinition object, which specifies the table structure including columns, keys, and foreign key relationships. The table is created in the domain schema associated with this DerivaML instance.

Required Classes: Import the following classes from deriva_ml to define tables:

TableDefinition: Defines the complete table structureColumnDefinition: Defines individual columns with types and constraintsKeyDefinition: Defines unique key constraints (optional)ForeignKeyDefinition: Defines foreign key relationships to other tables (optional)BuiltinTypes: Enum of available column data types

Available Column Types (BuiltinTypes enum):

text, int2, int4, int8, float4, float8, boolean,

date, timestamp, timestamptz, json, jsonb, markdown,

ermrest_uri, ermrest_rid, ermrest_rcb, ermrest_rmb,

ermrest_rct, ermrest_rmt

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

TableDefinition

|

A TableDefinition object containing the complete specification of the table to create. |

required |

update_navbar

|

bool

|

If True (default), automatically updates the navigation bar to include the new table. Set to False during batch table creation to avoid redundant updates, then call apply_catalog_annotations() once at the end. |

True

|

Returns:

| Name | Type | Description |

|---|---|---|

Table |

Table

|

The newly created ERMRest table object. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If table creation fails or the definition is invalid. |

Examples:

Simple table with basic columns:

>>> from deriva_ml import TableDefinition, ColumnDefinition, BuiltinTypes

>>>

>>> table_def = TableDefinition(

... name="Experiment",

... column_defs=[

... ColumnDefinition(name="Name", type=BuiltinTypes.text, nullok=False),

... ColumnDefinition(name="Date", type=BuiltinTypes.date),

... ColumnDefinition(name="Description", type=BuiltinTypes.markdown),

... ColumnDefinition(name="Score", type=BuiltinTypes.float4),

... ],

... comment="Records of experimental runs"

... )

>>> experiment_table = ml.create_table(table_def)

Table with foreign key to another table:

>>> from deriva_ml import (

... TableDefinition, ColumnDefinition, ForeignKeyDefinition, BuiltinTypes

... )

>>>

>>> # Create a Sample table that references Subject

>>> sample_def = TableDefinition(

... name="Sample",

... column_defs=[

... ColumnDefinition(name="Name", type=BuiltinTypes.text, nullok=False),

... ColumnDefinition(name="Subject", type=BuiltinTypes.text, nullok=False),

... ColumnDefinition(name="Collection_Date", type=BuiltinTypes.date),

... ],

... fkey_defs=[

... ForeignKeyDefinition(

... colnames=["Subject"],

... pk_sname=ml.default_schema, # Schema of referenced table

... pk_tname="Subject", # Name of referenced table

... pk_colnames=["RID"], # Column(s) in referenced table

... on_delete="CASCADE", # Delete samples when subject deleted

... )

... ],

... comment="Biological samples collected from subjects"

... )

>>> sample_table = ml.create_table(sample_def)

Table with unique key constraint:

>>> from deriva_ml import (

... TableDefinition, ColumnDefinition, KeyDefinition, BuiltinTypes

... )

>>>

>>> protocol_def = TableDefinition(

... name="Protocol",

... column_defs=[

... ColumnDefinition(name="Name", type=BuiltinTypes.text, nullok=False),

... ColumnDefinition(name="Version", type=BuiltinTypes.text, nullok=False),

... ColumnDefinition(name="Description", type=BuiltinTypes.markdown),

... ],

... key_defs=[

... KeyDefinition(

... colnames=["Name", "Version"],

... constraint_names=[["myschema", "Protocol_Name_Version_key"]],

... comment="Each protocol name+version must be unique"

... )

... ],

... comment="Experimental protocols with versioning"

... )

>>> protocol_table = ml.create_table(protocol_def)

Batch creation without navbar updates:

>>> ml.create_table(table1_def, update_navbar=False)

>>> ml.create_table(table2_def, update_navbar=False)

>>> ml.create_table(table3_def, update_navbar=False)

>>> ml.apply_catalog_annotations() # Update navbar once at the end

Source code in src/deriva_ml/core/base.py

1179 1180 1181 1182 1183 1184 1185 1186 1187 1188 1189 1190 1191 1192 1193 1194 1195 1196 1197 1198 1199 1200 1201 1202 1203 1204 1205 1206 1207 1208 1209 1210 1211 1212 1213 1214 1215 1216 1217 1218 1219 1220 1221 1222 1223 1224 1225 1226 1227 1228 1229 1230 1231 1232 1233 1234 1235 1236 1237 1238 1239 1240 1241 1242 1243 1244 1245 1246 1247 1248 1249 1250 1251 1252 1253 1254 1255 1256 1257 1258 1259 1260 1261 1262 1263 1264 1265 1266 1267 1268 1269 1270 1271 1272 1273 1274 1275 1276 1277 1278 1279 1280 1281 1282 1283 1284 1285 1286 1287 1288 1289 1290 1291 1292 1293 1294 1295 1296 1297 1298 1299 1300 | |

create_vocabulary

create_vocabulary(

vocab_name: str,

comment: str = "",

schema: str | None = None,

update_navbar: bool = True,

) -> Table

Creates a controlled vocabulary table.

A controlled vocabulary table maintains a list of standardized terms and their definitions. Each term can have synonyms and descriptions to ensure consistent terminology usage across the dataset.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

vocab_name

|

str

|

Name for the new vocabulary table. Must be a valid SQL identifier. |

required |

comment

|

str

|

Description of the vocabulary's purpose and usage. Defaults to empty string. |

''

|

schema

|

str | None

|

Schema name to create the table in. If None, uses domain_schema. |

None

|

update_navbar

|

bool

|

If True (default), automatically updates the navigation bar to include the new vocabulary table. Set to False during batch table creation to avoid redundant updates, then call apply_catalog_annotations() once at the end. |

True

|

Returns:

| Name | Type | Description |

|---|---|---|

Table |

Table

|

ERMRest table object representing the newly created vocabulary table. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If vocab_name is invalid or already exists. |

Examples:

Create a vocabulary for tissue types:

>>> table = ml.create_vocabulary(

... vocab_name="tissue_types",

... comment="Standard tissue classifications",

... schema="bio_schema"

... )

Create multiple vocabularies without updating navbar until the end:

>>> ml.create_vocabulary("Species", update_navbar=False)

>>> ml.create_vocabulary("Tissue_Type", update_navbar=False)

>>> ml.apply_catalog_annotations() # Update navbar once

Source code in src/deriva_ml/core/base.py

1121 1122 1123 1124 1125 1126 1127 1128 1129 1130 1131 1132 1133 1134 1135 1136 1137 1138 1139 1140 1141 1142 1143 1144 1145 1146 1147 1148 1149 1150 1151 1152 1153 1154 1155 1156 1157 1158 1159 1160 1161 1162 1163 1164 1165 1166 1167 1168 1169 1170 1171 1172 1173 1174 1175 1176 1177 | |

create_workflow

create_workflow(

name: str,

workflow_type: str | list[str],

description: str = "",

) -> Workflow

Creates a new workflow definition.

Creates a Workflow object that represents a computational process or analysis pipeline. The workflow type(s) must be terms from the controlled vocabulary. This method is typically used to define new analysis workflows before execution.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

name

|

str

|

Name of the workflow. |

required |

workflow_type

|

str | list[str]

|

Type(s) of workflow (must exist in workflow_type vocabulary). Can be a single string or a list of strings. |

required |

description

|

str

|

Description of what the workflow does. |

''

|

Returns:

| Name | Type | Description |

|---|---|---|

Workflow |

Workflow

|

New workflow object ready for registration. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If any workflow_type is not in the vocabulary. |

Examples:

>>> workflow = ml.create_workflow(

... name="RNA Analysis",

... workflow_type="python_notebook",

... description="RNA sequence analysis pipeline"

... )

>>> rid = ml._add_workflow(workflow)

Multiple types::

>>> workflow = ml.create_workflow(

... name="Training Pipeline",

... workflow_type=["Training", "Embedding"],

... description="Combined training and embedding pipeline"

... )

Source code in src/deriva_ml/core/mixins/workflow.py

361 362 363 364 365 366 367 368 369 370 371 372 373 374 375 376 377 378 379 380 381 382 383 384 385 386 387 388 389 390 391 392 393 394 395 396 397 398 399 400 401 402 | |

define_association

define_association(

associates: list,

metadata: list | None = None,

table_name: str | None = None,

comment: str | None = None,

**kwargs,

) -> dict

Build an association table definition with vocab-aware key selection.

Creates a table definition that links two or more tables via an association (many-to-many) table. Non-vocabulary tables automatically use RID as the foreign key target, while vocabulary tables use their Name key.

Use with create_table() to create the association table in the catalog.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

associates

|

list

|

Tables to associate. Each item can be: - A Table object - A (name, Table) tuple to customize the column name - A (name, nullok, Table) tuple for nullable references - A Key object for explicit key selection |

required |

metadata

|

list | None

|

Additional metadata columns or reference targets. |

None

|

table_name

|

str | None

|

Name for the association table. Auto-generated if omitted. |

None

|

comment

|

str | None

|

Comment for the association table. |

None

|

**kwargs

|

Additional arguments passed to Table.define_association. |

{}

|

Returns:

| Type | Description |

|---|---|

dict

|

Table definition dict suitable for |

Example::

# Associate Image with Subject (many-to-many)

image_table = ml.model.name_to_table("Image")

subject_table = ml.model.name_to_table("Subject")

assoc_def = ml.define_association(

associates=[image_table, subject_table],

comment="Links images to subjects",

)

ml.create_table(assoc_def)

Source code in src/deriva_ml/core/base.py

1302 1303 1304 1305 1306 1307 1308 1309 1310 1311 1312 1313 1314 1315 1316 1317 1318 1319 1320 1321 1322 1323 1324 1325 1326 1327 1328 1329 1330 1331 1332 1333 1334 1335 1336 1337 1338 1339 1340 1341 1342 1343 1344 1345 1346 1347 1348 1349 | |

delete_dataset

delete_dataset(

dataset: "Dataset",

recurse: bool = False,

) -> None

Soft-delete a dataset by marking it as deleted in the catalog.

Sets the Deleted flag on the dataset record. The dataset's data is

preserved but it will no longer appear in normal queries (e.g.,

find_datasets()). The dataset cannot be deleted if it is currently

nested inside a parent dataset.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

dataset

|

Dataset

|

The dataset to delete. |

required |

recurse

|

bool

|

If True, also soft-delete all nested child datasets. If False (default), only this dataset is marked as deleted. |

False

|

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If the dataset RID is not a valid dataset, or if the dataset is nested inside a parent dataset. |

Example

ds = ml.lookup_dataset("1-ABC") # doctest: +SKIP ml.delete_dataset(ds, recurse=False) # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/dataset.py

166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 | |

delete_feature

delete_feature(

table: Table | str,

feature_name: str,

) -> bool

Removes a feature definition and its data.

Deletes the feature and its implementation table from the catalog. This operation cannot be undone and will remove all feature values associated with this feature.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

Table | str

|

The table containing the feature, either as name or Table object. |

required |

feature_name

|

str

|

Name of the feature to delete. |

required |

Returns:

| Name | Type | Description |

|---|---|---|

bool |

bool

|

True if the feature was successfully deleted, False if it didn't exist. |

Raises:

| Type | Description |

|---|---|

DerivaMLException

|

If deletion fails due to constraints or permissions. |

Example

success = ml.delete_feature("samples", "obsolete_feature") # doctest: +SKIP print("Deleted" if success else "Not found")

Source code in src/deriva_ml/core/mixins/feature.py

240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 | |

delete_term

delete_term(

table: str | Table, term_name: str

) -> None

Delete a term from a vocabulary table.

Removes a term from the vocabulary. The term must not be in use by any records in the catalog (e.g., no datasets using this dataset type, no assets using this asset type).

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

table

|

str | Table

|

Vocabulary table containing the term (name or Table object). |

required |

term_name

|

str

|

Primary name of the term to delete. |

required |

Raises:

| Type | Description |

|---|---|

DerivaMLInvalidTerm

|

If the term doesn't exist in the vocabulary. |

DerivaMLException

|

If the term is currently in use by other records. |

Example

ml.delete_term("Dataset_Type", "Obsolete_Type") # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/vocabulary.py

388 389 390 391 392 393 394 395 396 397 398 399 400 401 402 403 404 405 406 407 408 409 410 411 412 413 414 415 416 417 418 419 420 421 422 423 424 425 426 427 428 429 430 | |

diff_schema

diff_schema() -> 'SchemaDiff'

Return the structural diff between the cached and live schemas.

Online mode only. Fetches the live catalog's /schema

payload, compares it against the cached copy with

:func:~deriva_ml.core.schema_diff._compute_diff, and returns

the result. The returned :class:SchemaDiff may be empty

(no drift) — callers should check diff.is_empty() rather

than truthiness.

Unlike :meth:pin_schema, this method never modifies the

cache and never logs a warning; it is a pure inspection

operation.

Returns:

| Name | Type | Description |

|---|---|---|

A |

'SchemaDiff'

|

class: |

Raises:

| Type | Description |

|---|---|

DerivaMLReadOnlyError

|

If called in offline mode. |

FileNotFoundError

|

If the workspace has no cache file. |

Source code in src/deriva_ml/core/base.py

672 673 674 675 676 677 678 679 680 681 682 683 684 685 686 687 688 689 690 691 692 693 694 695 696 697 698 699 700 | |

download_dataset_bag

download_dataset_bag(

dataset: DatasetSpec,

) -> "DatasetBag"

Downloads a dataset to the local filesystem.

Downloads a dataset specified by DatasetSpec to the local filesystem. If the catalog has s3_bucket configured and use_minid is enabled, the bag will be uploaded to S3 and registered with the MINID service.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

dataset

|

DatasetSpec

|

Specification of the dataset to download, including version and materialization options. |

required |

Returns:

| Name | Type | Description |

|---|---|---|

DatasetBag |

'DatasetBag'

|

Object containing: - path: Local filesystem path to downloaded dataset - rid: Dataset's Resource Identifier - minid: Dataset's Minimal Viable Identifier (if MINID enabled) |

Note

MINID support requires s3_bucket to be configured when creating the DerivaML instance. The catalog's use_minid setting controls whether MINIDs are created.

Examples:

Download with default options: >>> spec = DatasetSpec(rid="1-abc123") # doctest: +SKIP >>> bag = ml.download_dataset_bag(dataset=spec) # doctest: +SKIP >>> print(f"Downloaded to {bag.path}") # doctest: +SKIP

Source code in src/deriva_ml/core/mixins/dataset.py

291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 | |

download_dir

download_dir(

cached: bool = False,

) -> Path

Returns the appropriate download directory.

Provides the appropriate directory path for storing downloaded files, either in the cache or working directory.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

cached

|

bool

|

If True, returns the cache directory path. If False, returns the working directory path. |

False

|

Returns:

| Name | Type | Description |

|---|---|---|

Path |

Path

|

Directory path where downloaded files should be stored. |

Example

cache_dir = ml.download_dir(cached=True) work_dir = ml.download_dir(cached=False)

Source code in src/deriva_ml/core/base.py

784 785 786 787 788 789 790 791 792 793 794 795 796 797 798 799 800 | |

estimate_bag_size

estimate_bag_size(

dataset: "DatasetSpec",

) -> dict[str, Any]

Estimate the size of a dataset bag before downloading.

Generates the same download specification used by download_dataset_bag, then runs COUNT and SUM(Length) queries against the snapshot catalog to preview what a download will contain and how large it will be.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

dataset

|

'DatasetSpec'

|

Specification of the dataset to estimate, including version and optional exclude_tables. |

required |

Returns:

| Type | Description |

|---|---|

dict[str, Any]

|

dict with keys: - tables: dict mapping table name to {row_count, is_asset, asset_bytes} - total_rows: total row count across all tables - total_asset_bytes: total size of asset files in bytes - total_asset_size: human-readable size string (e.g., "1.2 GB") |

Source code in src/deriva_ml/core/mixins/dataset.py

332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 | |

estimate_denormalized_size

estimate_denormalized_size(

include_tables: list[str],

) -> dict[str, Any]

Return schema shape + catalog-wide size estimates for a denormalized table.

This is the catalog-wide analog of

:meth:Dataset.describe_denormalized. It asks "if I were to

denormalize these tables across the entire catalog (not scoped

to any specific dataset), what would the result look like and

how big would it be?" Useful for rough size estimation before

committing to a bag export.

The return shape is aligned with :meth:estimate_bag_size and

is NOT the same as the dataset-scoped 12-key plan dict from

:meth:Dataset.describe_denormalized (spec §5). Do not confuse

the two.

Parameters:

| Name | Type | Description | Default |

|---|---|---|---|

include_tables

|

list[str]

|

List of table names to include in the join. |

required |

Returns:

| Type | Description |

|---|---|

dict[str, Any]

|

dict with these keys: |

dict[str, Any]

|

|

dict[str, Any]

|

|

dict[str, Any]

|

|

dict[str, Any]

|

|

dict[str, Any]

|

|

dict[str, Any]

|

|

Example::

info = ml.estimate_denormalized_size(["Image", "Subject"])

print(f"{info['total_rows']} rows across "

f"{len(info['tables'])} tables, "

f"{info['total_asset_size']} of assets")

See Also

Dataset.describe_denormalized: Dataset-scoped planning dict. Denormalizer.describe: Full dataset-scoped plan with ambiguity reporting. estimate_bag_size: Bag-level size estimation.

Source code in src/deriva_ml/core/mixins/dataset.py

390 391 392 393 394 395 396 397 398 399 400 401 402 403 404 405 406 407 408 409 410 411 412 413 414 415 416 417 418 419 420 421 422 423 424 425 426 427 428 429 430 431 432 433 434 435 436 437 438 439 440 441 442 443 444 445 446 447 448 449 450 451 452 453 454 455 456 457 458 459 460 461 462 463 464 465 466 467 468 469 470 471 472 473 474 475 476 477 478 479 480 481 482 483 484 485 486 487 488 489 490 491 492 493 494 495 496 497 498 499 500 501 502 503 504 | |

feature_record_class

feature_record_class(

table: str | Table,

feature_name: str,

) -> type[FeatureRecord]

Returns a dynamically generated Pydantic model class for creating feature records.

Each feature has a unique set of columns based on its definition (terms, assets, metadata). This method returns a Pydantic class with fields corresponding to those columns, providing:

- Type validation: Values are validated against expected types (str, int, float, Path)